I am starting the rust/python programs in a separate process with BashOperator, and it's stuck on _recv(). Here is a dump of what the scheduler is doing, while it's stuck. We are using airflow because once we sync all the historical data, we run the task once per day each new day. read csv records for each day and transform them with a custom rust parsing toolĪny program written in rust or python that takes a long time to execute will cause this problem.decompress csv.gz files into csv files (disk speed is the bottleneck) (takes about 4 hours to run the first time).download all the archives from via a python script (internet speed is the bottleneck) (takes about 3 hours the first run).This issue occurs even if there is no database being used by my application. Unfortunately the information we have now is not enough to deduce the reason.Īny insight of WHERE the schduler is locked might help with investigating it. Also getting all logs of scheduler and seing if it actually "does" something might help. Is it possible to dump the state of scheduler and dump generaly more of the state of your machine, resouces, sockets, DB locks while it happens (this shoudl be possible with py-spy for example). My best guess is that you have some lock on the database and it makes scheduler wait while running some query.

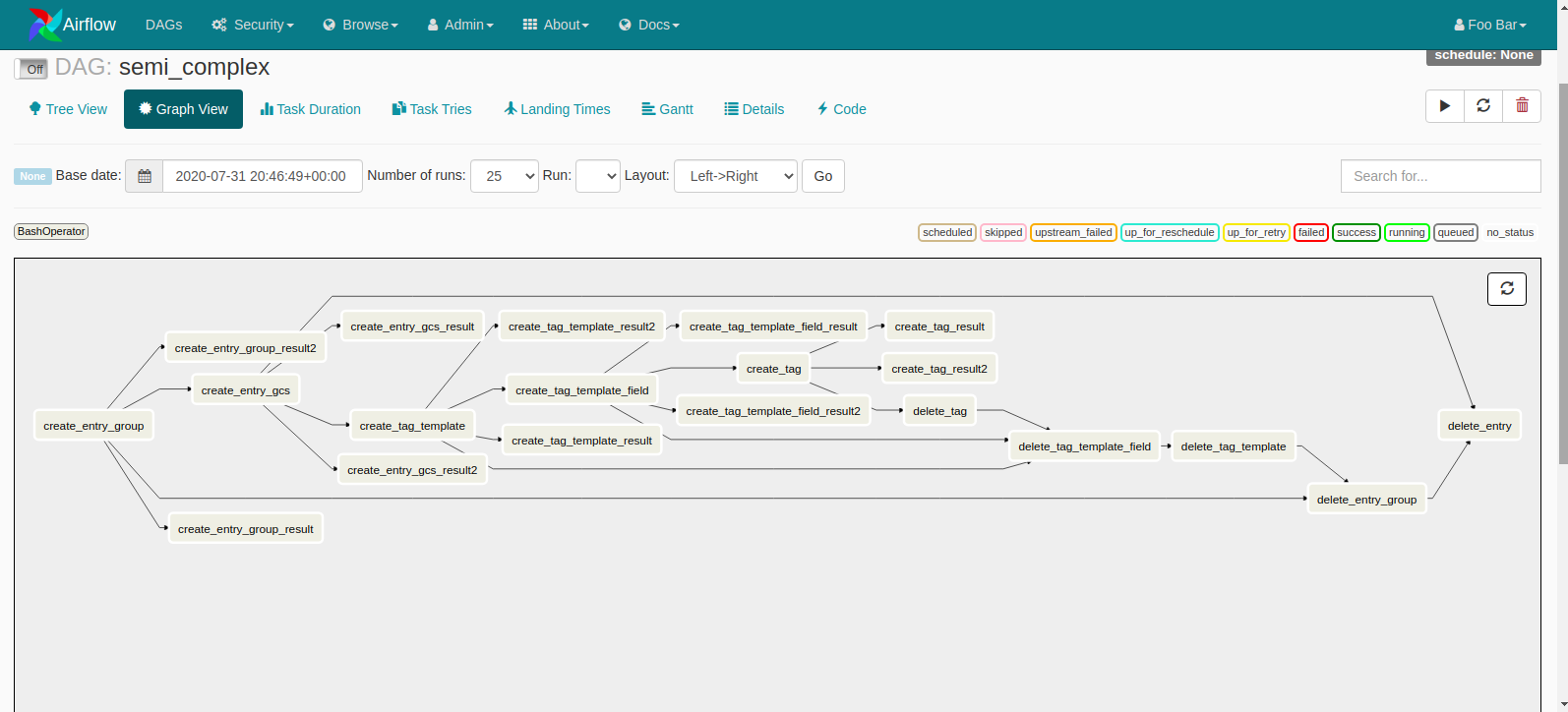

But what it is, it's hard to say from the information provided. This is almost certainly something specific to your deployment (others do not experience it). There must be something in your DAGs or task that simply causes the Airflow scheduler to lock up. It certainly looks like your long running task blocks some resources that blocks scheduler somehow (but there is no indication how it can be blocked). It does not look like like Airflow problem to be honest. Python_path | /opt/airflow:/usr/lib/python39.zip:/usr/lib/python3.9:/usr/lib/python3.9/lib-dynload:/opt/airflow/lib/python3.9/site-packages:/opt/data_workflows/dags:/opt/airflow/config:/oĪpache-airflow-providers-postgres | 2.3.0 System_path | /usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/snap/bin Ssh | OpenSSH_8.2p1 Ubuntu-4ubuntu0.3, OpenSSL 1.1.1f Python_location | /opt/airflow/bin/python Sql_alchemy_conn | | /opt/data_workflows/dags Task_logging_handler | _task_handler.FileTaskHandler I agree to follow this project's Code of Conduct.But I expect to be able to do some parallel execution /w LocalExecutor. When the long-running task finishes, the other tasks resume normally. This occurs every time consistently, also on 2.1.2 I run a command with bashoperator (I use it because I have python, C, and rust programs being scheduled by airflow).īash_command='umask 002 & cd /opt/my_code/ & /opt/my_code/venv/bin/python -m path.to.my.python.namespace'Īirflow_database_engine_collation_for_ids:Īirflow_dags_are_paused_at_creation: TrueĪirflow_plugins_folder: "/plugins" I expect my long running BashOperator task to run, but for airflow to have the resources to run other tasks without getting blocked like this. 
We're only using 3% CPU and 2 GB of memory (out of 64 GB) but the scheduler is unable to run any other simple task at the same time.Ĭurrently only the long task is running, everything else is queued, even thought we have more resources: I run a single BashOperator (for a long running task, we have to download data for 8+ hours initially to download from the rate-limited data source API, then download more each day in small increments). Installed with Virtualenv / ansible - What happened Virtualenv installation Deployment details Linux / Ubuntu Server Versions of Apache Airflow ProvidersĪpache-airflow-providers-postgres=2.3.0 Deployment
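The request to capture the scheduler's state with py-spy can be scripted so the dump is easy to repeat while the hang is happening. The following is only a minimal sketch under several assumptions: a Linux host (it reads `/proc`), `py-spy` installed and on `PATH`, and a scheduler process whose command line contains the string `airflow scheduler`. The helper names are hypothetical and not part of Airflow or py-spy.

```python
import subprocess
from pathlib import Path
from typing import List


def find_scheduler_pids(needle: str = "airflow scheduler",
                        proc_root: str = "/proc") -> List[int]:
    """Return PIDs whose command line contains `needle` (Linux /proc layout).

    The default needle is an assumption about how the scheduler was started.
    """
    pids = []
    for entry in Path(proc_root).iterdir():
        if not entry.name.isdigit():
            continue  # skip non-process entries such as /proc/self
        try:
            raw = (entry / "cmdline").read_bytes()
        except OSError:
            continue  # process exited or is not readable
        # /proc/<pid>/cmdline separates arguments with NUL bytes
        cmdline = raw.replace(b"\0", b" ").decode(errors="replace").strip()
        if needle in cmdline:
            pids.append(int(entry.name))
    return sorted(pids)


def py_spy_dump_command(pid: int) -> List[str]:
    """Build the `py-spy dump` invocation for one process."""
    return ["py-spy", "dump", "--pid", str(pid)]


def dump_scheduler_stacks() -> None:
    """Print the current Python stack of every matching process."""
    for pid in find_scheduler_pids():
        print(f"=== py-spy dump for PID {pid} ===")
        result = subprocess.run(py_spy_dump_command(pid),
                                capture_output=True, text=True, check=False)
        # py-spy reports permission problems on stderr
        print(result.stdout or result.stderr)
```

Calling `dump_scheduler_stacks()` a few times, some seconds apart, shows whether the scheduler stays in the same frame (for example a blocking receive) or is actually making progress. Attaching to another process may require elevated privileges, depending on the host's ptrace settings.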

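The `bash_command` in the report chains three shell steps (`umask`, `cd`, then the Python module), and the chaining operator matters. With `&&` each step runs in the same shell only after the previous one succeeded; a single `&` sends the preceding command to a background subshell, so a backgrounded `cd` or `umask` has no effect on the command that follows. The sketch below illustrates that difference with plain `bash`, outside Airflow; the function names are hypothetical.

```python
import shlex
import subprocess


def run_in_bash(command: str) -> str:
    """Run a command line with `bash -c` and return its stripped stdout."""
    result = subprocess.run(["bash", "-c", command],
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


def compare_chaining(target_dir: str) -> dict:
    """Show where `pwd` runs after `cd` chained with `&&` versus `&`."""
    quoted = shlex.quote(target_dir)
    return {
        # `&&`: cd happens in this shell, so pwd reports target_dir
        "sequential": run_in_bash(f"cd {quoted} && pwd -P"),
        # `&`: cd happens in a background subshell, so pwd reports
        # the directory the shell was started in
        "background": run_in_bash(f"cd {quoted} & pwd -P"),
    }
```

For a directory other than the current one, `compare_chaining(path)["sequential"]` is the resolved `path`, while the `"background"` entry is still the caller's working directory.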